Deep Learning Neural Network Feature Extraction (Part 4)

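The Inception model is the backbone feature-extraction network of GoogLeNet, proposed by Google. From Inceptionv1 through Xception, the architecture has been refined step by step. This article introduces two of the more classic variants, Inceptionv3 and Xception.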

Inceptionv3 model

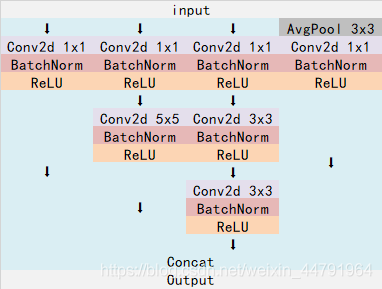

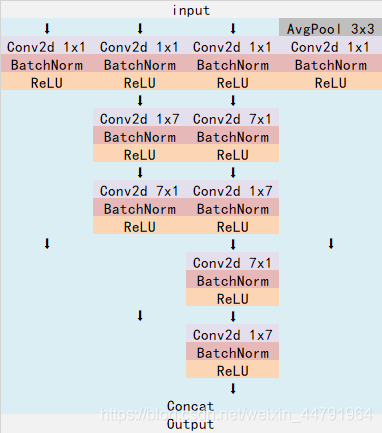

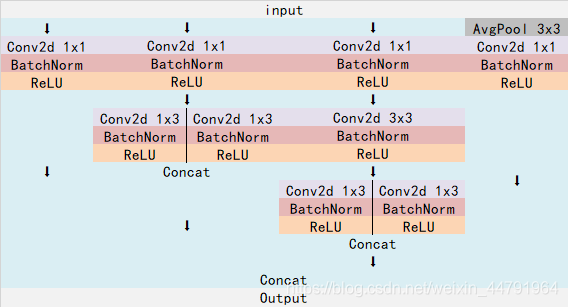

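Compared with other models, the key innovation of Inceptionv3 is its use of four parallel branches, each with a different convolution kernel size and therefore a different receptive field. The branch outputs are then fused, which yields features at several scales. The network has three main parts, block1, block2, and block3, connected in sequence to form Inceptionv3 (the number of layers may vary between implementations, but the overall structure is the same).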
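To make the sequential layout concrete, the sketch below traces the spatial size of a 299x299 input through the stem and through the downsampling steps that open block2 and block3. The kernel sizes, strides, and padding modes are read off the InceptionV3 code in this article. The helper `out_size` is a hypothetical name introduced for illustration, and it assumes the usual Keras rules for 'valid' and 'same' padding.

```python
import math

def out_size(size, kernel, stride=1, padding="valid"):
    """Spatial output size of a conv/pool layer (assumed Keras padding rules)."""
    if padding == "same":
        return math.ceil(size / stride)
    return (size - kernel) // stride + 1

# (kernel, stride, padding) for each stem layer, in order
stem = [
    (3, 2, "valid"),  # conv, 32 filters
    (3, 1, "valid"),  # conv, 32 filters
    (3, 1, "same"),   # conv, 64 filters
    (3, 2, "valid"),  # max pooling
    (1, 1, "valid"),  # conv, 80 filters
    (3, 1, "valid"),  # conv, 192 filters
    (3, 2, "valid"),  # max pooling
]

sizes = [299]
for kernel, stride, padding in stem:
    sizes.append(out_size(sizes[-1], kernel, stride, padding))

block1_size = sizes[-1]                      # block1 keeps the stem's output size
block2_size = out_size(block1_size, 3, 2)    # stride-2 'valid' reduction
block3_size = out_size(block2_size, 3, 2)    # stride-2 'valid' reduction

print(sizes)                                 # [299, 149, 147, 147, 73, 73, 71, 35]
print(block1_size, block2_size, block3_size) # 35 17 8
```

The three block sizes, 35, 17, and 8, match the section headers used in the code.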

In block1, the four branches are convolution layers with different kernel sizes.
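As a rough illustration of why the branches see different scales, the sketch below computes the receptive field of each block1 branch from its kernel sizes, together with the channel count after concatenation. Kernel sizes and filter counts are taken from Block1 part1 in the code. `receptive_field` is a hypothetical helper and assumes stride-1 layers only.

```python
def receptive_field(kernels):
    """Receptive field of a stack of stride-1 layers with square kernels."""
    rf = 1
    for k in kernels:
        rf += k - 1
    return rf

# Block1 part1: kernel sizes along each branch and the branch's output channels
branches = {
    "branch1x1":    {"kernels": [1],       "filters": 64},
    "branch5x5":    {"kernels": [1, 5],    "filters": 64},
    "branch3x3dbl": {"kernels": [1, 3, 3], "filters": 96},
    "branch_pool":  {"kernels": [3, 1],    "filters": 32},  # 3x3 average pool, then 1x1 conv
}

fields = {name: receptive_field(b["kernels"]) for name, b in branches.items()}
out_channels = sum(b["filters"] for b in branches.values())

print(fields)        # {'branch1x1': 1, 'branch5x5': 5, 'branch3x3dbl': 5, 'branch_pool': 3}
print(out_channels)  # 256
```

Two stacked 3x3 convolutions cover the same 5x5 window as a single 5x5 convolution, and the four branches together produce the 256 channels noted in the code comment.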

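In block2, the branches replace the original square convolution with a combination of horizontal and vertical convolutions (for example, a 1x7 convolution followed by a 7x1 convolution), which reduces the number of parameters.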
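The saving can be checked with simple arithmetic. The sketch below compares the weight count of a hypothetical full 7x7 convolution with the 1x7 plus 7x1 pair. The channel width of 192 is borrowed from Block2 part5, biases are ignored because the code uses `use_bias=False`, and `conv_params` is an illustrative helper, not part of the original code.

```python
def conv_params(k_h, k_w, c_in, c_out):
    """Number of weights in a convolution without bias."""
    return k_h * k_w * c_in * c_out

c = 192  # assumed input and output channel width
full = conv_params(7, 7, c, c)
factorized = conv_params(1, 7, c, c) + conv_params(7, 1, c, c)

print(full)               # 1806336
print(factorized)         # 516096
print(full / factorized)  # 3.5
```

For an n x n kernel the ratio is n / 2, so the benefit grows with the kernel size.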

block3 uses the same form of convolution as block2; only the kernel sizes in the combination differ.
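The channel counts in block3 can be verified by summing the branch outputs, as sketched below with the filter numbers from the code. One detail visible in the code is that the 1x3 and 3x1 convolutions in block3 are applied side by side to the same input and concatenated, rather than chained one after the other as in block2. The dictionary names here are only for illustration.

```python
# Block3 part1 (reduction): the max-pool branch passes the 768 input channels through
part1_branches = {"branch3x3": 320, "branch7x7x3": 192, "branch_pool": 768}
part1_channels = sum(part1_branches.values())

# Block3 part2 and part3: the 1x3 and 3x1 outputs (384 each) are concatenated
# inside each of the two middle branches
part2_branches = {
    "branch1x1": 320,
    "branch3x3": 384 + 384,
    "branch3x3dbl": 384 + 384,
    "branch_pool": 192,
}
part2_channels = sum(part2_branches.values())

print(part1_channels)  # 1280
print(part2_channels)  # 2048
```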

The code is as follows:

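#-------------------------------------------------------------#
#   InceptionV3 network
#-------------------------------------------------------------#
# Imports assume the Keras API bundled with TensorFlow 2.x.
from tensorflow.keras import layers
from tensorflow.keras.layers import (Activation, AveragePooling2D, BatchNormalization,
                                     Conv2D, Dense, GlobalAveragePooling2D, Input,
                                     MaxPooling2D)
from tensorflow.keras.models import Model

def conv2d_bn(x, filters, num_row, num_col, padding='same', strides=(1, 1), name=None):
    # Conv2D -> BatchNormalization -> ReLU; `name` is accepted but not used
    x = Conv2D(filters, (num_row, num_col), strides=strides, padding=padding, use_bias=False)(x)
    x = BatchNormalization(scale=False)(x)
    x = Activation('relu')(x)
    return x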

def InceptionV3(input_shape=(299, 299, 3), classes=1000):
    img_input = Input(shape=input_shape)

    # 299x299 -> 149x149
    x = conv2d_bn(img_input, 32, 3, 3, strides=(2, 2), padding='valid')
    # 149x149 -> 147x147
    x = conv2d_bn(x, 32, 3, 3, padding='valid')
    # 147x147 -> 147x147
    x = conv2d_bn(x, 64, 3, 3)
    # 147x147 -> 73x73
    x = MaxPooling2D((3, 3), strides=(2, 2))(x)

    # 73x73 -> 73x73
    x = conv2d_bn(x, 80, 1, 1, padding='valid')
    # 73x73 -> 71x71
    x = conv2d_bn(x, 192, 3, 3, padding='valid')
    # 71x71 -> 35x35
    x = MaxPooling2D((3, 3), strides=(2, 2))(x)

    #--------------------------------#
    #   Block1 35x35
    #--------------------------------#
    # Block1 part1
    # 35 x 35 x 192 -> 35 x 35 x 256
    branch1x1 = conv2d_bn(x, 64, 1, 1)  # first branch

    branch5x5 = conv2d_bn(x, 48, 1, 1)  # second branch
    branch5x5 = conv2d_bn(branch5x5, 64, 5, 5)

    branch3x3dbl = conv2d_bn(x, 64, 1, 1)  # third branch
    branch3x3dbl = conv2d_bn(branch3x3dbl, 96, 3, 3)
    branch3x3dbl = conv2d_bn(branch3x3dbl, 96, 3, 3)

    branch_pool = AveragePooling2D((3, 3), strides=(1, 1), padding='same')(x)  # fourth branch
    branch_pool = conv2d_bn(branch_pool, 32, 1, 1)
    x = layers.concatenate([branch1x1, branch5x5, branch3x3dbl, branch_pool], axis=3, name='mixed0')

    # Block1 part2
    # 35 x 35 x 256 -> 35 x 35 x 288
    branch1x1 = conv2d_bn(x, 64, 1, 1)

    branch5x5 = conv2d_bn(x, 48, 1, 1)
    branch5x5 = conv2d_bn(branch5x5, 64, 5, 5)

    branch3x3dbl = conv2d_bn(x, 64, 1, 1)
    branch3x3dbl = conv2d_bn(branch3x3dbl, 96, 3, 3)
    branch3x3dbl = conv2d_bn(branch3x3dbl, 96, 3, 3)

    branch_pool = AveragePooling2D((3, 3), strides=(1, 1), padding='same')(x)
    branch_pool = conv2d_bn(branch_pool, 64, 1, 1)
    x = layers.concatenate([branch1x1, branch5x5, branch3x3dbl, branch_pool], axis=3, name='mixed1')

    # Block1 part3
    # 35 x 35 x 288 -> 35 x 35 x 288
    branch1x1 = conv2d_bn(x, 64, 1, 1)

    branch5x5 = conv2d_bn(x, 48, 1, 1)
    branch5x5 = conv2d_bn(branch5x5, 64, 5, 5)

    branch3x3dbl = conv2d_bn(x, 64, 1, 1)
    branch3x3dbl = conv2d_bn(branch3x3dbl, 96, 3, 3)
    branch3x3dbl = conv2d_bn(branch3x3dbl, 96, 3, 3)

    branch_pool = AveragePooling2D((3, 3), strides=(1, 1), padding='same')(x)
    branch_pool = conv2d_bn(branch_pool, 64, 1, 1)
    x = layers.concatenate([branch1x1, branch5x5, branch3x3dbl, branch_pool], axis=3, name='mixed2')

    #--------------------------------#
    #   Block2 17x17
    #--------------------------------#
    # Block2 part1
    # 35 x 35 x 288 -> 17 x 17 x 768
    branch3x3 = conv2d_bn(x, 384, 3, 3, strides=(2, 2), padding='valid')

    branch3x3dbl = conv2d_bn(x, 64, 1, 1)
    branch3x3dbl = conv2d_bn(branch3x3dbl, 96, 3, 3)
    branch3x3dbl = conv2d_bn(branch3x3dbl, 96, 3, 3, strides=(2, 2), padding='valid')

    branch_pool = MaxPooling2D((3, 3), strides=(2, 2))(x)
    x = layers.concatenate([branch3x3, branch3x3dbl, branch_pool], axis=3, name='mixed3')

    # Block2 part2
    # 17 x 17 x 768 -> 17 x 17 x 768
    branch1x1 = conv2d_bn(x, 192, 1, 1)

    branch7x7 = conv2d_bn(x, 128, 1, 1)
    branch7x7 = conv2d_bn(branch7x7, 128, 1, 7)
    branch7x7 = conv2d_bn(branch7x7, 192, 7, 1)

    branch7x7dbl = conv2d_bn(x, 128, 1, 1)
    branch7x7dbl = conv2d_bn(branch7x7dbl, 128, 7, 1)
    branch7x7dbl = conv2d_bn(branch7x7dbl, 128, 1, 7)
    branch7x7dbl = conv2d_bn(branch7x7dbl, 128, 7, 1)
    branch7x7dbl = conv2d_bn(branch7x7dbl, 192, 1, 7)

    branch_pool = AveragePooling2D((3, 3), strides=(1, 1), padding='same')(x)
    branch_pool = conv2d_bn(branch_pool, 192, 1, 1)
    x = layers.concatenate([branch1x1, branch7x7, branch7x7dbl, branch_pool], axis=3, name='mixed4')

    # Block2 part3 and part4
    # 17 x 17 x 768 -> 17 x 17 x 768 -> 17 x 17 x 768
    for i in range(2):
        branch1x1 = conv2d_bn(x, 192, 1, 1)

        branch7x7 = conv2d_bn(x, 160, 1, 1)
        branch7x7 = conv2d_bn(branch7x7, 160, 1, 7)
        branch7x7 = conv2d_bn(branch7x7, 192, 7, 1)

        branch7x7dbl = conv2d_bn(x, 160, 1, 1)
        branch7x7dbl = conv2d_bn(branch7x7dbl, 160, 7, 1)
        branch7x7dbl = conv2d_bn(branch7x7dbl, 160, 1, 7)
        branch7x7dbl = conv2d_bn(branch7x7dbl, 160, 7, 1)
        branch7x7dbl = conv2d_bn(branch7x7dbl, 192, 1, 7)

        branch_pool = AveragePooling2D((3, 3), strides=(1, 1), padding='same')(x)
        branch_pool = conv2d_bn(branch_pool, 192, 1, 1)
        x = layers.concatenate([branch1x1, branch7x7, branch7x7dbl, branch_pool], axis=3, name='mixed' + str(5 + i))

    # Block2 part5
    # 17 x 17 x 768 -> 17 x 17 x 768
    branch1x1 = conv2d_bn(x, 192, 1, 1)

    branch7x7 = conv2d_bn(x, 192, 1, 1)
    branch7x7 = conv2d_bn(branch7x7, 192, 1, 7)
    branch7x7 = conv2d_bn(branch7x7, 192, 7, 1)

    branch7x7dbl = conv2d_bn(x, 192, 1, 1)
    branch7x7dbl = conv2d_bn(branch7x7dbl, 192, 7, 1)
    branch7x7dbl = conv2d_bn(branch7x7dbl, 192, 1, 7)
    branch7x7dbl = conv2d_bn(branch7x7dbl, 192, 7, 1)
    branch7x7dbl = conv2d_bn(branch7x7dbl, 192, 1, 7)

    branch_pool = AveragePooling2D((3, 3), strides=(1, 1), padding='same')(x)
    branch_pool = conv2d_bn(branch_pool, 192, 1, 1)
    x = layers.concatenate([branch1x1, branch7x7, branch7x7dbl, branch_pool], axis=3, name='mixed7')

    #--------------------------------#
    #   Block3 8x8
    #--------------------------------#
    # Block3 part1
    # 17 x 17 x 768 -> 8 x 8 x 1280
    branch3x3 = conv2d_bn(x, 192, 1, 1)
    branch3x3 = conv2d_bn(branch3x3, 320, 3, 3, strides=(2, 2), padding='valid')

    branch7x7x3 = conv2d_bn(x, 192, 1, 1)
    branch7x7x3 = conv2d_bn(branch7x7x3, 192, 1, 7)
    branch7x7x3 = conv2d_bn(branch7x7x3, 192, 7, 1)
    branch7x7x3 = conv2d_bn(branch7x7x3, 192, 3, 3, strides=(2, 2), padding='valid')

    branch_pool = MaxPooling2D((3, 3), strides=(2, 2))(x)
    x = layers.concatenate([branch3x3, branch7x7x3, branch_pool], axis=3, name='mixed8')

    # Block3 part2 and part3
    # 8 x 8 x 1280 -> 8 x 8 x 2048 -> 8 x 8 x 2048
    for i in range(2):
        branch1x1 = conv2d_bn(x, 320, 1, 1)

        branch3x3 = conv2d_bn(x, 384, 1, 1)
        branch3x3_1 = conv2d_bn(branch3x3, 384, 1, 3)
        branch3x3_2 = conv2d_bn(branch3x3, 384, 3, 1)
        branch3x3 = layers.concatenate([branch3x3_1, branch3x3_2], axis=3, name='mixed9_' + str(i))

        branch3x3dbl = conv2d_bn(x, 448, 1, 1)
        branch3x3dbl = conv2d_bn(branch3x3dbl, 384, 3, 3)
        branch3x3dbl_1 = conv2d_bn(branch3x3dbl, 384, 1, 3)
        branch3x3dbl_2 = conv2d_bn(branch3x3dbl, 384, 3, 1)
        branch3x3dbl = layers.concatenate([branch3x3dbl_1, branch3x3dbl_2], axis=3)

        branch_pool = AveragePooling2D((3, 3), strides=(1, 1), padding='same')(x)
        branch_pool = conv2d_bn(branch_pool, 192, 1, 1)
        x = layers.concatenate([branch1x1, branch3x3, branch3x3dbl, branch_pool], axis=3, name='mixed' + str(9 + i))

    # Global average pooling followed by a fully connected layer.
    x = GlobalAveragePooling2D(name='avg_pool')(x)
    x = Dense(classes, activation='softmax', name='predictions')(x)

    inputs = img_input
    model = Model(inputs, x, name='inception_v3')
    return model

Xception model

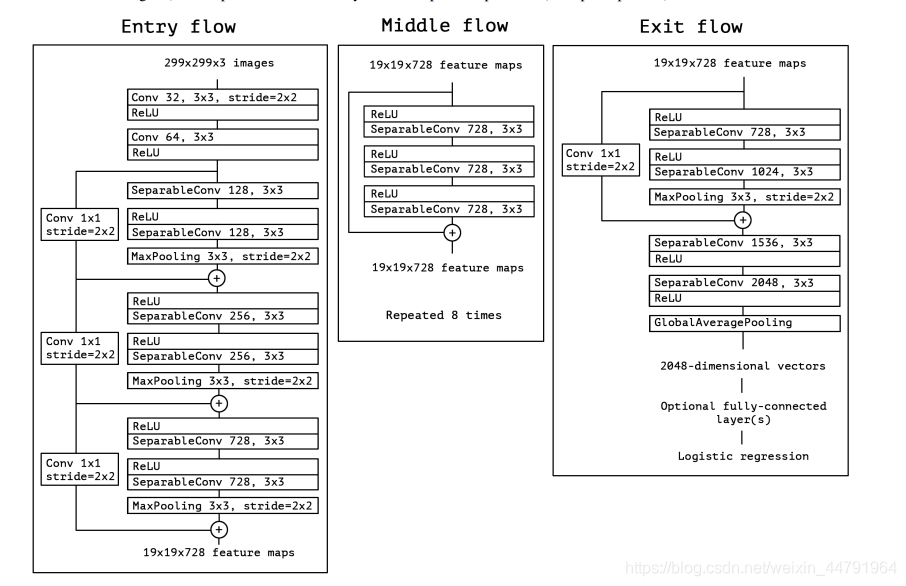

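Xception is an improvement on Inceptionv3. The main change is that the original multi-size convolutions are replaced with depthwise separable convolutions. Depthwise separable convolution was covered in the earlier MobileNet article, so we go straight to the Xception network structure.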
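As a brief reminder of what the replacement buys, the sketch below compares the weight count of a standard 3x3 convolution with a depthwise separable one at the 728-channel width used in the Xception middle flow. It assumes a depth multiplier of 1 and no bias (the code uses `use_bias=False`). The two helper functions are hypothetical names for illustration only.

```python
def standard_conv_params(k, c_in, c_out):
    """Weights in a standard k x k convolution without bias."""
    return k * k * c_in * c_out

def separable_conv_params(k, c_in, c_out):
    """Weights in a depthwise separable convolution (depth multiplier 1, no bias)."""
    depthwise = k * k * c_in   # one k x k filter per input channel
    pointwise = c_in * c_out   # 1x1 convolution that mixes channels
    return depthwise + pointwise

standard = standard_conv_params(3, 728, 728)
separable = separable_conv_params(3, 728, 728)

print(standard)   # 4769856
print(separable)  # 536536
```

At this width the separable layer needs roughly one ninth of the weights of the standard layer.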

Like Inception, Xception is divided into three parts: entry flow, middle flow, and exit flow. It contains 14 blocks in total: 4 in the entry flow, 8 in the middle flow (one block structure repeated 8 times), and 2 in the exit flow.
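The sketch below tallies the blocks per flow and traces the spatial size of a 299x299 input through the layers that change it. Layer names, kernel sizes, strides, and padding modes are taken from the Xception code in this article (Keras `Conv2D` defaults to 'valid' padding). `out_size` is a hypothetical helper that assumes the usual Keras padding rules.

```python
import math

def out_size(size, kernel, stride=1, padding="valid"):
    """Spatial output size of a conv/pool layer (assumed Keras padding rules)."""
    if padding == "same":
        return math.ceil(size / stride)
    return (size - kernel) // stride + 1

flows = {"entry flow": 4, "middle flow": 8, "exit flow": 2}
total_blocks = sum(flows.values())

# Only the layers that change the spatial size: (name, kernel, stride, padding)
resizing_layers = [
    ("block1_conv1", 3, 2, "valid"),
    ("block1_conv2", 3, 1, "valid"),
    ("block2_pool", 3, 2, "same"),
    ("block3_pool", 3, 2, "same"),
    ("block4_pool", 3, 2, "same"),
    ("block13_pool", 3, 2, "same"),
]

size = 299
trace = {}
for name, kernel, stride, padding in resizing_layers:
    size = out_size(size, kernel, stride, padding)
    trace[name] = size

print(total_blocks)  # 14
print(trace)
# {'block1_conv1': 149, 'block1_conv2': 147, 'block2_pool': 74,
#  'block3_pool': 37, 'block4_pool': 19, 'block13_pool': 10}
```

The middle flow keeps the 19x19 resolution throughout, and the final feature map before global average pooling is 10x10.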

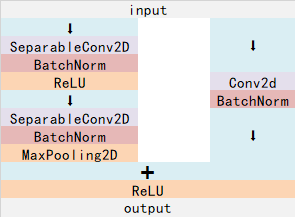

The block structure used in the entry flow and exit flow is shown in the figure below:

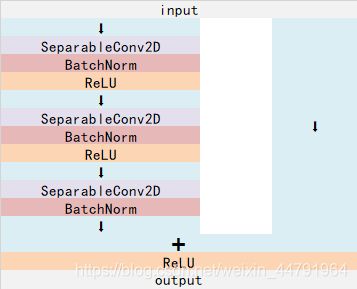

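The block structure of the middle flow is shown in the figure below:

If you have already studied ResNet, this structure should look familiar, so let's go straight to the code.

The code is as follows: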

#-------------------------------------------------------------#
#   Xception network
#-------------------------------------------------------------#
# Imports assume the Keras API bundled with TensorFlow 2.x.
from tensorflow.keras import layers
from tensorflow.keras.layers import (Activation, BatchNormalization, Conv2D, Dense,
                                     GlobalAveragePooling2D, Input, MaxPooling2D,
                                     SeparableConv2D)
from tensorflow.keras.models import Model

def Xception(input_shape=(299, 299, 3), classes=1000):
    img_input = Input(shape=input_shape)

    #--------------------------#
    #   Entry flow
    #--------------------------#
    #--------------------#
    #   block1
    #--------------------#
    # 299,299,3 -> 147,147,64
    x = Conv2D(32, (3, 3), strides=(2, 2), use_bias=False, name='block1_conv1')(img_input)
    x = BatchNormalization(name='block1_conv1_bn')(x)
    x = Activation('relu', name='block1_conv1_act')(x)
    x = Conv2D(64, (3, 3), use_bias=False, name='block1_conv2')(x)
    x = BatchNormalization(name='block1_conv2_bn')(x)
    x = Activation('relu', name='block1_conv2_act')(x)

    #--------------------#
    #   block2
    #--------------------#
    # 147,147,64 -> 74,74,128
    residual = Conv2D(128, (1, 1), strides=(2, 2), padding='same', use_bias=False)(x)
    residual = BatchNormalization()(residual)

    x = SeparableConv2D(128, (3, 3), padding='same', use_bias=False, name='block2_sepconv1')(x)
    x = BatchNormalization(name='block2_sepconv1_bn')(x)
    x = Activation('relu', name='block2_sepconv2_act')(x)
    x = SeparableConv2D(128, (3, 3), padding='same', use_bias=False, name='block2_sepconv2')(x)
    x = BatchNormalization(name='block2_sepconv2_bn')(x)

    x = MaxPooling2D((3, 3), strides=(2, 2), padding='same', name='block2_pool')(x)
    x = layers.add([x, residual])

    #--------------------#
    #   block3
    #--------------------#
    # 74,74,128 -> 37,37,256
    residual = Conv2D(256, (1, 1), strides=(2, 2), padding='same', use_bias=False)(x)
    residual = BatchNormalization()(residual)

    x = Activation('relu', name='block3_sepconv1_act')(x)
    x = SeparableConv2D(256, (3, 3), padding='same', use_bias=False, name='block3_sepconv1')(x)
    x = BatchNormalization(name='block3_sepconv1_bn')(x)
    x = Activation('relu', name='block3_sepconv2_act')(x)
    x = SeparableConv2D(256, (3, 3), padding='same', use_bias=False, name='block3_sepconv2')(x)
    x = BatchNormalization(name='block3_sepconv2_bn')(x)

    x = MaxPooling2D((3, 3), strides=(2, 2), padding='same', name='block3_pool')(x)
    x = layers.add([x, residual])

    #--------------------#
    #   block4
    #--------------------#
    # 37,37,256 -> 19,19,728
    residual = Conv2D(728, (1, 1), strides=(2, 2), padding='same', use_bias=False)(x)
    residual = BatchNormalization()(residual)

    x = Activation('relu', name='block4_sepconv1_act')(x)
    x = SeparableConv2D(728, (3, 3), padding='same', use_bias=False, name='block4_sepconv1')(x)
    x = BatchNormalization(name='block4_sepconv1_bn')(x)
    x = Activation('relu', name='block4_sepconv2_act')(x)
    x = SeparableConv2D(728, (3, 3), padding='same', use_bias=False, name='block4_sepconv2')(x)
    x = BatchNormalization(name='block4_sepconv2_bn')(x)

    x = MaxPooling2D((3, 3), strides=(2, 2), padding='same', name='block4_pool')(x)
    x = layers.add([x, residual])

    #--------------------------#
    #   Middle flow
    #--------------------------#
    #--------------------#
    #   block5--block12
    #--------------------#
    # 19,19,728 -> 19,19,728
    for i in range(8):
        residual = x
        prefix = 'block' + str(i + 5)

        x = Activation('relu', name=prefix + '_sepconv1_act')(x)
        x = SeparableConv2D(728, (3, 3), padding='same', use_bias=False, name=prefix + '_sepconv1')(x)
        x = BatchNormalization(name=prefix + '_sepconv1_bn')(x)
        x = Activation('relu', name=prefix + '_sepconv2_act')(x)
        x = SeparableConv2D(728, (3, 3), padding='same', use_bias=False, name=prefix + '_sepconv2')(x)
        x = BatchNormalization(name=prefix + '_sepconv2_bn')(x)
        x = Activation('relu', name=prefix + '_sepconv3_act')(x)
        x = SeparableConv2D(728, (3, 3), padding='same', use_bias=False, name=prefix + '_sepconv3')(x)
        x = BatchNormalization(name=prefix + '_sepconv3_bn')(x)

        x = layers.add([x, residual])

    #--------------------------#
    #   Exit flow
    #--------------------------#
    #--------------------#
    #   block13
    #--------------------#
    # 19,19,728 -> 10,10,1024
    residual = Conv2D(1024, (1, 1), strides=(2, 2), padding='same', use_bias=False)(x)
    residual = BatchNormalization()(residual)

    x = Activation('relu', name='block13_sepconv1_act')(x)
    x = SeparableConv2D(728, (3, 3), padding='same', use_bias=False, name='block13_sepconv1')(x)
    x = BatchNormalization(name='block13_sepconv1_bn')(x)
    x = Activation('relu', name='block13_sepconv2_act')(x)
    x = SeparableConv2D(1024, (3, 3), padding='same', use_bias=False, name='block13_sepconv2')(x)
    x = BatchNormalization(name='block13_sepconv2_bn')(x)

    x = MaxPooling2D((3, 3), strides=(2, 2), padding='same', name='block13_pool')(x)
    x = layers.add([x, residual])

    #--------------------#
    #   block14
    #--------------------#
    # 10,10,1024 -> 10,10,2048
    x = SeparableConv2D(1536, (3, 3), padding='same', use_bias=False, name='block14_sepconv1')(x)
    x = BatchNormalization(name='block14_sepconv1_bn')(x)
    x = Activation('relu', name='block14_sepconv1_act')(x)

    x = SeparableConv2D(2048, (3, 3), padding='same', use_bias=False, name='block14_sepconv2')(x)
    x = BatchNormalization(name='block14_sepconv2_bn')(x)
    x = Activation('relu', name='block14_sepconv2_act')(x)

    # Global average pooling followed by a fully connected layer.
    x = GlobalAveragePooling2D(name='avg_pool')(x)
    x = Dense(classes, activation='softmax', name='predictions')(x)

    inputs = img_input
    model = Model(inputs, x, name='xception')
    return model